The internet was on fire this week talking about ChatGPT – an interactive artificial intelligence application from OpenAI. The application accepts text-based prompts and responds in a conversational way.

And it does feel very human! From their website:

The dialogue format makes it possible for ChatGPT to answer followup questions, admit its mistakes, challenge incorrect premises, and reject inappropriate requests.

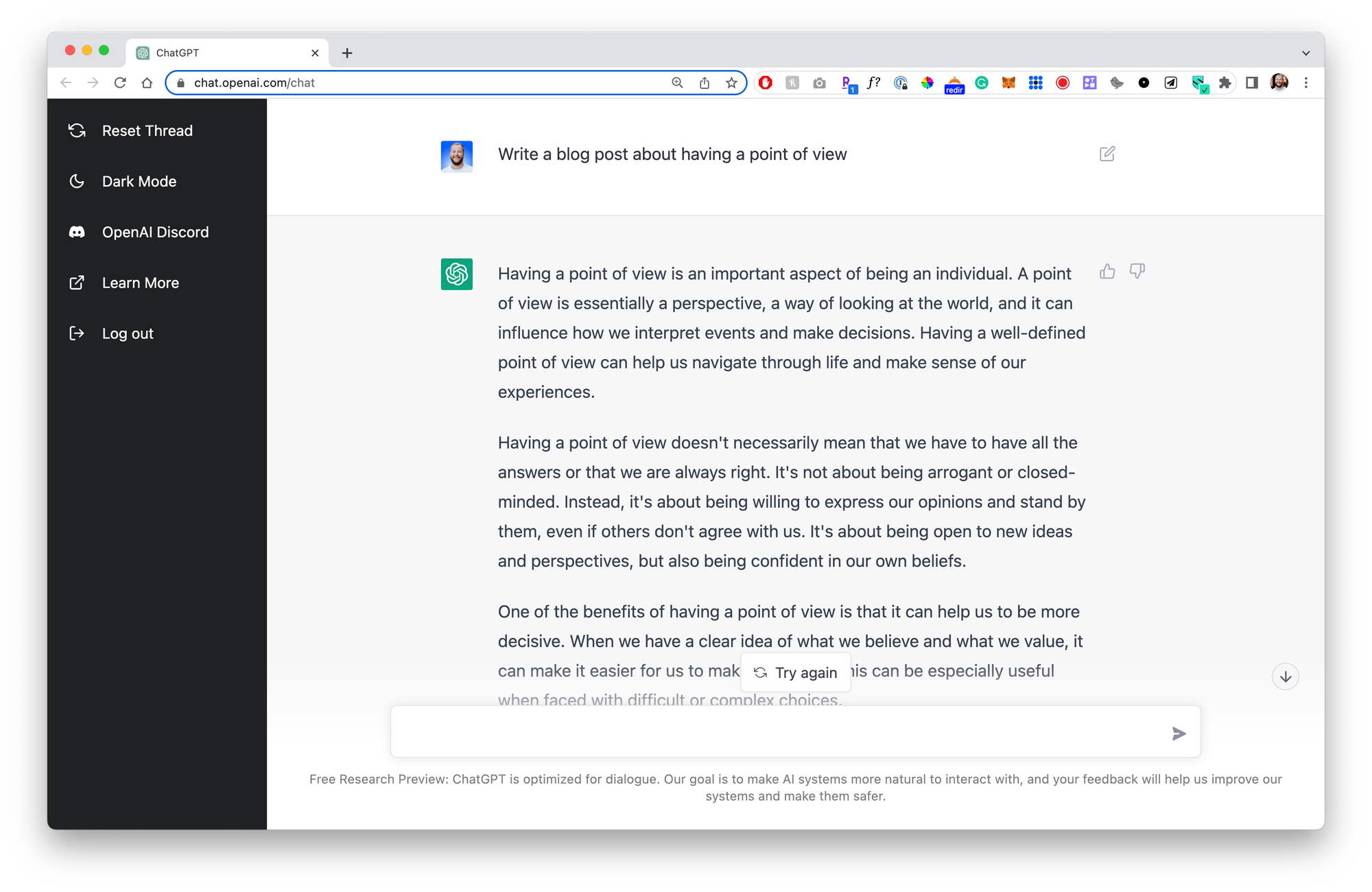

It's super easy to use and genuinely very impressive. I asked it to create a blog post on having a point of view, and it generated a 6-paragraph essay in less than 10 seconds.

The internet had a lot of fun with this tool, finding all kinds of creative examples of how ChatGPT can create responses for prompts like:

- Writing a sketch for SNL

- Fixing bugs in code

- Writing a scene from Seinfeld

- Even creating art prompts for Midjourney (another AI tool):

OK so @OpenAI's new #ChatGPT can basically just generate #AIart prompts. I asked a one-line question, and typed the answers verbatim straight into MidJourney and boom. Times are getting weird...🤯 pic.twitter.com/sYwdscUxxf

— Guy Parsons (@GuyP) November 30, 2022

If you want to dive into the rabbit hole, here's a good collection of discoveries.

As a result, there were also a LOT of people calling this the beginning of the end of content creation or even a major threat to Google:

If ChatGPT replaces Google most of the websites that get visitors through content are going to die.

— Andrea Bosoni (@theandreboso) December 2, 2022

And I get it! This can create really well-written, logical, accurate content and seemingly has no limits to how specific you can be.

If you're curious about how this technology works, here's a critical passage from that same blog post I mentioned above:

ChatGPT is fine-tuned from a model in the GPT-3.5 series, which finished training in early 2022. You can learn more about the 3.5 series here. ChatGPT and GPT 3.5 were trained on an Azure AI supercomputing infrastructure.

That will start you down the rabbit hole. To be honest, I haven't gone super deep down this rabbit hole myself. My (basic) understanding is that these "models" are created by feeding them a ton of different information (including hundreds of thousands of Wikipedia articles). The model is then tuned to understand that information, relate pieces of it to one another, and translate it into natural human language.

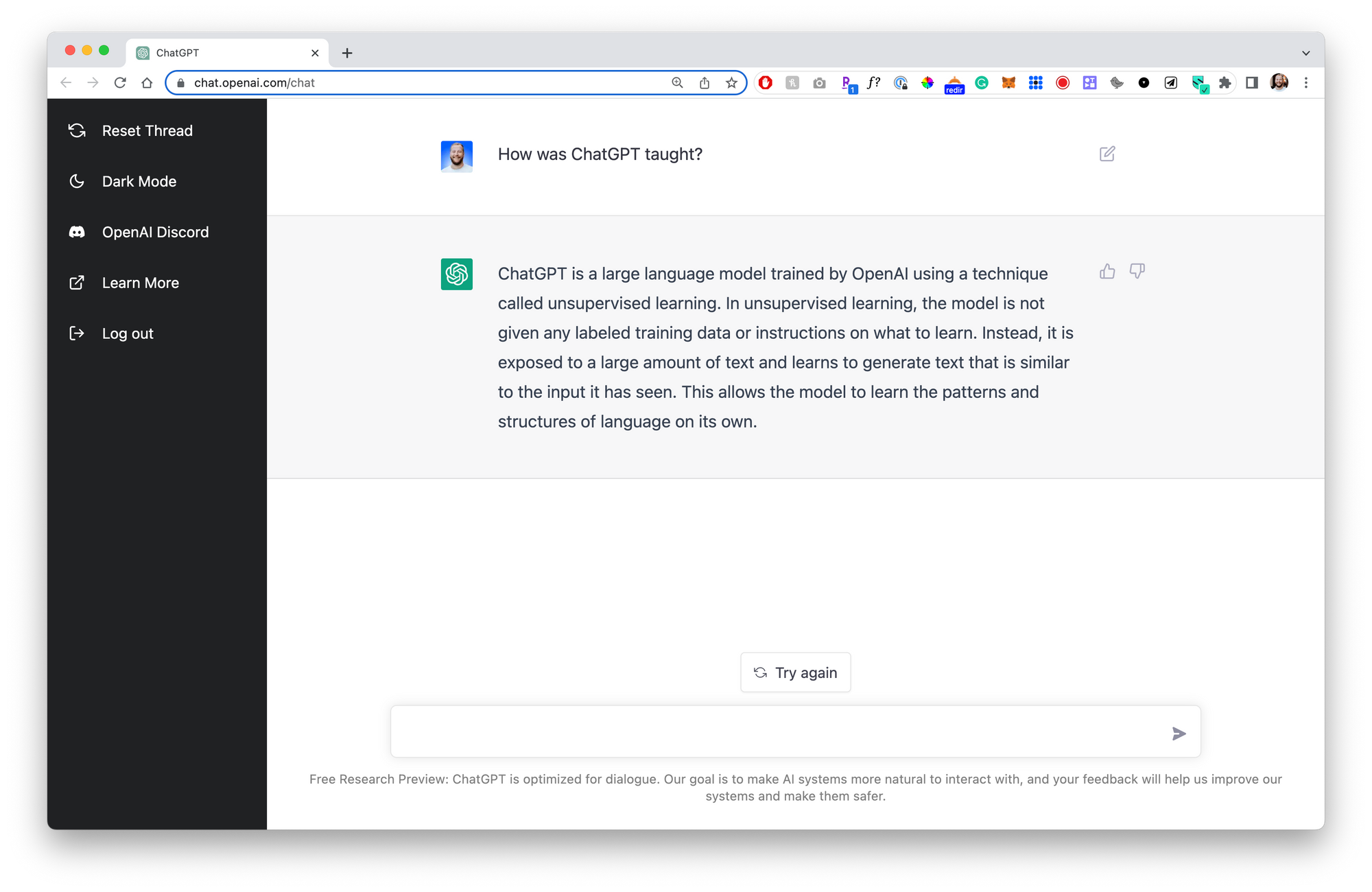

Actually, screw it, let's just ask ChatGPT how it works:

ChatGPT is a large language model trained by OpenAI using a technique called unsupervised learning. In unsupervised learning, the model is not given any labeled training data or instructions on what to learn. Instead, it is exposed to a large amount of text and learns to generate text that is similar to the input it has seen. This allows the model to learn the patterns and structures of language on its own.
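If "learns the patterns of language on its own" feels abstract, here's a toy sketch in Python. To be clear, this is not how ChatGPT is built: the real thing is a vastly larger neural network, and the function names `train_bigram_model` and `generate` are ones I made up for illustration. It only shows the core idea of learning from raw text with no labels. The program reads some text, remembers which word tends to follow which, and then uses those patterns to produce new text.

```python
import random
from collections import defaultdict


def train_bigram_model(text):
    """Record, for each word, every word that followed it in the text."""
    words = text.split()
    followers = defaultdict(list)
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word].append(next_word)
    return followers


def generate(followers, start_word, length=8, seed=0):
    """Build a sentence by repeatedly picking a word seen after the last one."""
    rng = random.Random(seed)  # fixed seed so the same inputs give the same text
    output = [start_word]
    for _ in range(length - 1):
        options = followers.get(output[-1])
        if not options:  # this word was never followed by anything, so stop
            break
        output.append(rng.choice(options))
    return " ".join(output)


# No labels and no instructions: just raw text to learn from.
corpus = "the cat sat on the mat and the dog sat on the rug"
model = train_bigram_model(corpus)
print(generate(model, "the"))
```

Every pair of neighboring words in the output already appeared somewhere in the training text, which is a tiny version of "generating text that is similar to the input it has seen."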

OK – that's enough of how we got here...

Let's talk about what happens next and how that impacts creators (especially creator-educators).

Will this become our personal learning interface? An always-on personal assistant who can answer virtually any question nearly instantly?

It's possible that if the model continues to be fed new, current information, it becomes a "super brain" of all known (and shared) information.

But my understanding is that it is in fact limited to information that has been shared and ingested. Even so, it seems logical to me that the process of teaching the model gets faster and faster, eventually receiving updates in near real-time...

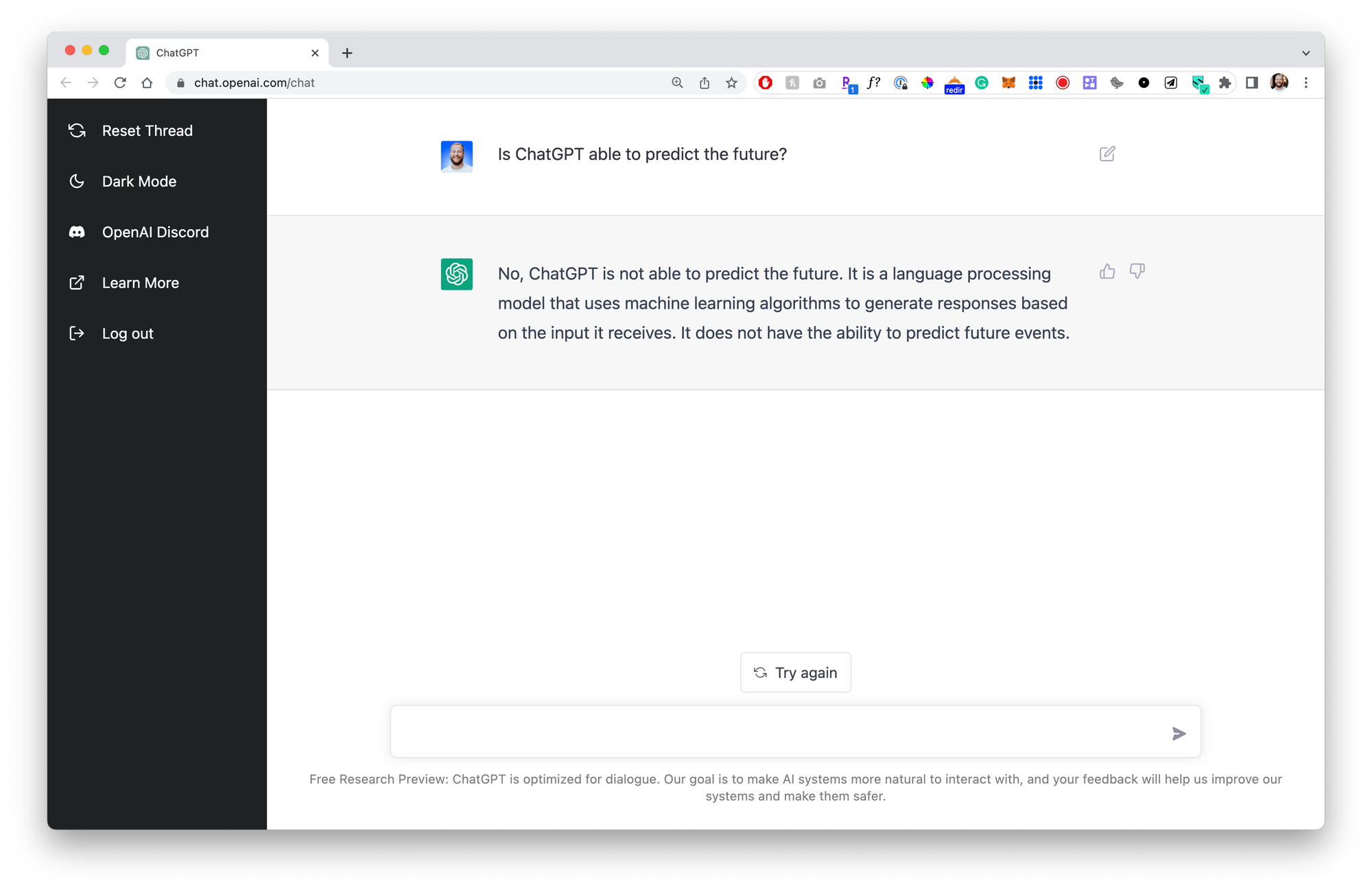

And in that world, it can create responses based on all the available information, but it still can't predict the future...right?

Let's ask:

No, ChatGPT is not able to predict the future. It is a language processing model that uses machine learning algorithms to generate responses based on the input it receives. It does not have the ability to predict future events.

OK, great.

Now, I do believe people will find creative ways to prompt ChatGPT into making what appear to be "predictions" based on the information it's been fed. Here's an example from a couple of days ago that predicts the next 5 years in the USA.

And maybe those predictions are well-informed!

But I believe it will be a long time before we trust an AI's opinions, predictions, and "point of view" over those of a human.

That's our opportunity.

Our businesses as creators are built on the trust and interest that people have in our perspective. Your audience trusts that you understand their needs and use a nuanced filter to contextualize information for them.

And as social creatures, I think we WANT to trust in people. I don't see that going away – even if humans' ability to independently transfer knowledge becomes objectively "inferior."

I will choose a human’s perspective over AI-generated perspective any time.

— Jay Clouse (@jayclouse) December 2, 2022

I’m sure I could be fooled…

But if I find out I was fooled, you’ve lost me as a fan — maybe forever.

Proceed with caution in your use (and disclosure).

We are human. We are vain and we are arrogant. We are likely to continue to trust in humans more than machines (just look at how negatively people generally respond to fully self-driving cars)!

The likely reality is that, at some point in the future, creators adopt these technologies as some part of their content creation process. It's an input into the system that gets manipulated or inspires the output.

In the immediate term, we'll probably see some really direct and sketchy uses of these tools to create a TON of content quickly. A lot of content is bad already and having a program create equal or slightly better content will easily go unnoticed.

But transparent disclosure of how, when, and why you're using these tools will become important to protect the trust of your audience.

Because if your audience trusts that they are receiving YOUR perspective, you don't want to bait and switch them. If you use AI to create content and then simply share that with minimal or no changes, where is your perspective?

Not only does that feel like a betrayal of trust, but it's also not a smart long-term strategy. In a world where this technology is available to anyone, how would your use of it stand out?

Your advantage is your unique point of view.

That's what people want from YOU.

So you can spend your time playing with ChatGPT or you can spend your time honing your point of view (hint: base it on your earned insight).

Make it unique.

Make it spiky.

Make it yours.